TL;DR

Google DeepMind has publicly detailed the "Magic Pointer" AI system for Googlebook, a research-driven feature that lets users point at real-world objects with their phone camera to get instant, context-aware information. The underlying technology is also being integrated into Gemini within Chrome, making it available to millions of users starting next month, and represents a major step toward seamless, real-time visual search.

What Happened

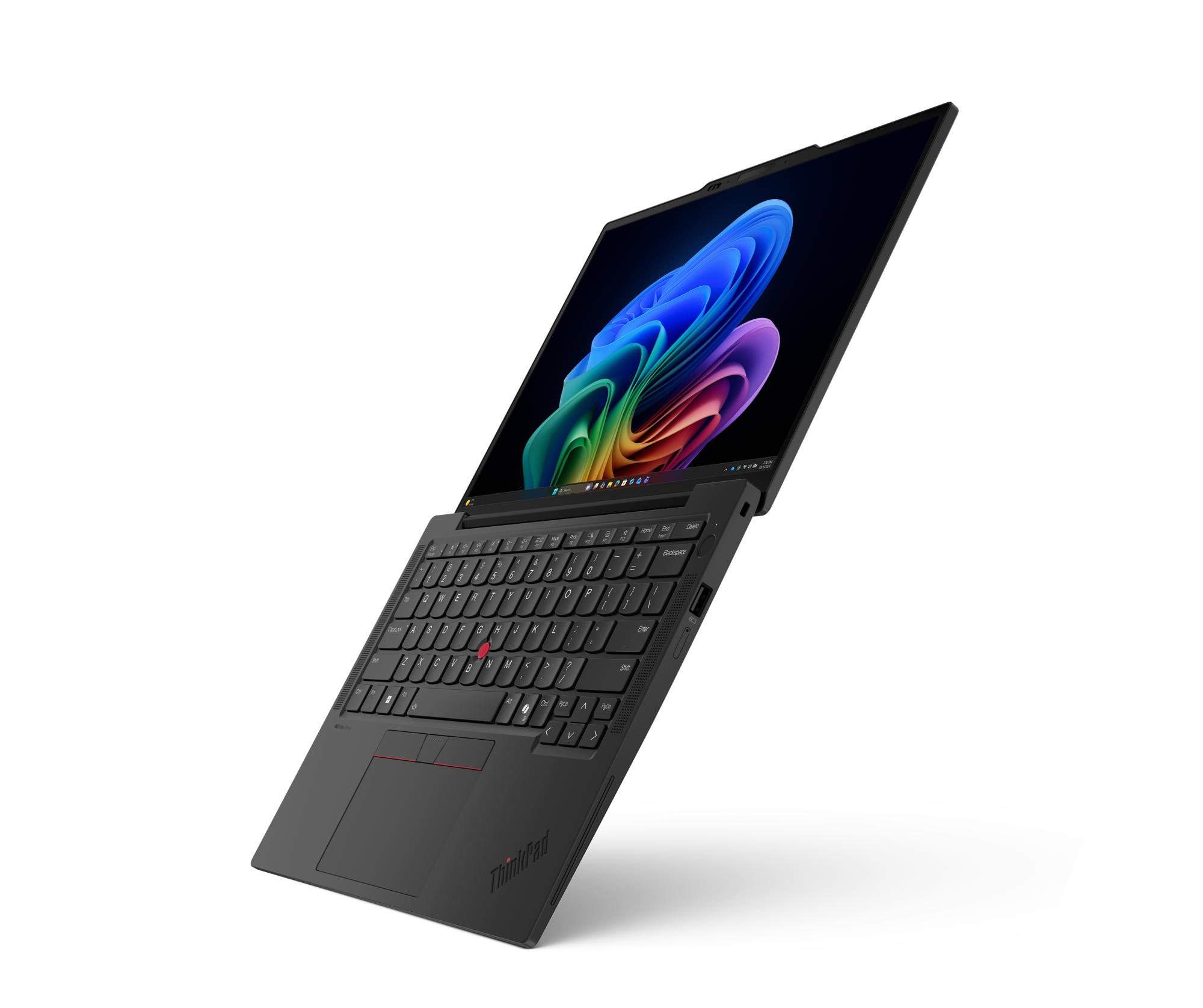

On Tuesday, May 12, 2026, Google DeepMind published the technical details and live demos for what it calls the "Magic Pointer" — a new AI capability embedded within Googlebook that allows users to point their phone camera at any physical object and receive instant, contextual information overlaid directly on the screen. The research team behind the underlying capability confirmed that the same model architecture is being adapted for Gemini within Chrome, with a public rollout expected in June 2026.

Key Facts

- The "Magic Pointer" is built on a DeepMind research model that combines real-time object tracking, multimodal language processing, and spatial awareness to identify and describe objects at which a user points.

- Googlebook, the company's experimental AR/computer-vision platform, serves as the initial deployment vehicle, with live demos available today for users who opt into the Googlebook beta program.

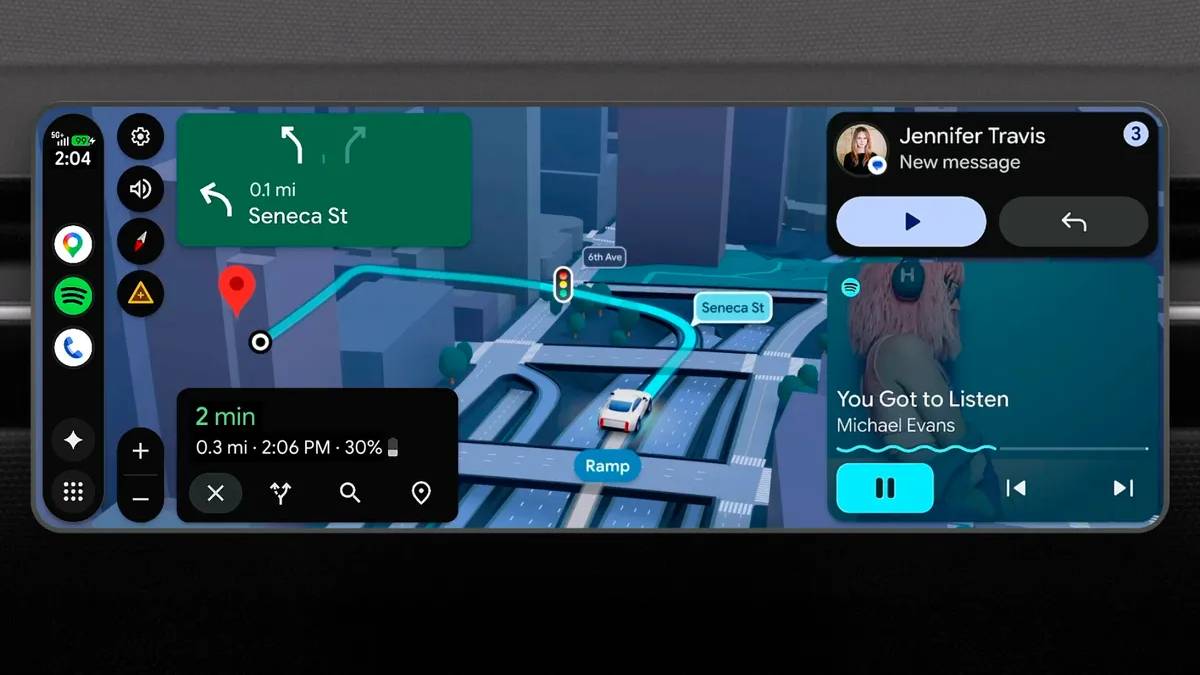

- The underlying capability is being ported to Gemini within Chrome, meaning desktop and mobile browser users will be able to point their phone camera or use a webcam to identify objects without needing dedicated AR hardware.

- DeepMind researchers disclosed that the model achieves under 50 milliseconds of latency from camera input to overlay generation, a threshold they say is critical for "natural-feeling" interaction.

- The system uses a novel "pointer-attention" mechanism that fuses a user's pointing gesture — detected via the phone's gyroscope, accelerometer, and camera feed — with the visual scene to determine the target object.

- Google confirmed the feature will launch in June 2026 for Gemini Advanced subscribers on Chrome, with a free-tier rollout expected later in Q3 2026.

- The research paper, published on DeepMind's website, lists 14 co-authors from the DeepMind AR and multimodal teams, including lead researcher Dr. Elena Voss.
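To make the "pointer-attention" idea from the list above more concrete, here is a minimal illustrative sketch of one way a pointing estimate could be fused with per-frame detections to pick a target. It is not DeepMind's published method: the `Detection` type, the Gaussian distance weighting, the `sigma` value, and the assumption that sensor fusion has already produced a pointer location `(px, py)` in normalized image coordinates are all hypothetical.

```python
import math
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Detection:
    """Hypothetical per-frame detection; coordinates are normalized to [0, 1]."""
    label: str
    cx: float          # bounding-box center, x
    cy: float          # bounding-box center, y
    confidence: float  # detector score in (0, 1]


def pointer_weights(detections: List[Detection], px: float, py: float,
                    sigma: float = 0.15) -> List[float]:
    """Softmax weights: objects nearer the pointer and with higher confidence score higher."""
    logits = [
        -((d.cx - px) ** 2 + (d.cy - py) ** 2) / (2 * sigma ** 2)
        + math.log(d.confidence)
        for d in detections
    ]
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def pick_target(detections: List[Detection], px: float, py: float,
                sigma: float = 0.15) -> Optional[str]:
    """Return the label with the largest attention weight, or None if nothing was detected."""
    if not detections:
        return None
    weights = pointer_weights(detections, px, py, sigma)
    best = max(range(len(detections)), key=weights.__getitem__)
    return detections[best].label


scene = [
    Detection("houseplant", cx=0.30, cy=0.50, confidence=0.90),
    Detection("bicycle chain", cx=0.80, cy=0.50, confidence=0.95),
]
target = pick_target(scene, px=0.35, py=0.50)  # pointer sits near the houseplant
```

In this sketch a smaller `sigma` makes selection stricter about pointing accuracy, while a larger one lets detector confidence dominate.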

Breaking It Down

The Magic Pointer represents a fundamental shift in how users interact with AI-powered search. Unlike traditional visual search — where a user must snap a photo, upload it, and wait for results — this system operates in real time, with the AI continuously analyzing the camera feed and instantly responding to where the user points. The demos show a user pointing at a houseplant and immediately receiving care instructions, or pointing at a broken bicycle chain and getting step-by-step repair guides overlaid on the image. The integration with Googlebook makes the interaction feel almost telepathic: the phone knows exactly what you're looking at and what you want to know about it.

The model's "pointer-attention" mechanism achieves under 50 milliseconds of latency, meaning the entire pipeline — gesture detection, object identification, contextual search, and overlay rendering — completes faster than a single blink of the human eye.

This latency figure is the technical breakthrough. Previous attempts at real-time object identification, such as Google Lens or Apple's Visual Look Up, required explicit capture-and-query cycles that broke the user's flow. DeepMind's pointer-attention architecture solves this by treating the user's pointing gesture as a continuous attention signal rather than a discrete trigger. The model runs a lightweight object-detection network on every frame, but only activates the full multimodal language model when the pointing gesture stabilizes on a target for more than 200 milliseconds. This hybrid approach keeps battery drain manageable — DeepMind claims less than 15% additional battery usage during a typical 10-minute session — while maintaining the responsiveness that makes the feature feel magical.
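The hybrid gating described above can be sketched as a small dwell-time gate: a cheap detector names a target on every frame, and the expensive model is triggered only once the same target has been held for more than 200 milliseconds. This is a minimal sketch under stated assumptions, not DeepMind's implementation; the `DwellGate` class, its method names, and the roughly 30 fps timestamps are hypothetical, and only the 200 ms threshold comes from the article.

```python
from dataclasses import dataclass
from typing import Optional

DWELL_MS = 200.0  # stabilization threshold quoted in the article


@dataclass
class DwellGate:
    """Fires once per target, after it has been held for longer than dwell_ms."""
    dwell_ms: float = DWELL_MS
    _target: Optional[str] = None
    _since_ms: float = 0.0
    _fired: bool = False

    def update(self, target: Optional[str], now_ms: float) -> bool:
        """Feed the per-frame target; return True when the heavy model should run."""
        if target != self._target:
            # Target changed or was lost: restart the dwell timer.
            self._target = target
            self._since_ms = now_ms
            self._fired = False
            return False
        if target is None or self._fired:
            return False
        if now_ms - self._since_ms > self.dwell_ms:
            self._fired = True  # avoid re-triggering on every later frame
            return True
        return False


# Hypothetical stream of (target from the lightweight detector, timestamp in ms).
frames = [
    (None, 0), ("plant", 33), ("plant", 66), ("plant", 200),
    ("plant", 240), ("plant", 280), ("bike", 300), ("bike", 520),
]
gate = DwellGate()
fired_at = [t for target, t in frames if gate.update(target, t)]
print(fired_at)  # [240, 520]
```

Firing only once per stable target is one plausible way to keep the heavy model's duty cycle, and therefore battery drain, low.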

The decision to bring this to Gemini in Chrome is strategically significant. By decoupling the capability from Googlebook's AR hardware requirements, Google makes the feature accessible to anyone with a smartphone and the Chrome browser. This expands the addressable user base roughly 1,650-fold, from the estimated 2 million Googlebook beta users to Chrome's 3.3 billion monthly active users. The move also positions Gemini as a direct competitor to Apple's rumored AR-glasses ecosystem and Meta's Ray-Ban Stories, but without requiring users to buy new hardware. Google is betting that software-first AR, where the phone's camera and screen serve as the interface, will achieve mass adoption faster than dedicated wearables.

What Comes Next

Google's roadmap for the Magic Pointer capability extends well beyond the initial Googlebook and Chrome launches. The company has indicated that the underlying model is being adapted for multiple form factors and use cases.

- June 2026: Gemini Advanced subscribers on Chrome will get the Magic Pointer feature as a toggle in the browser's settings. Users will be able to activate it by clicking a camera icon in the Gemini sidebar, then pointing their phone or webcam at any object. Google has confirmed this will support over 50 languages at launch.

- Q3 2026: A free-tier rollout for all Chrome users, though with reduced query limits, likely 20 interactions per day based on Google's typical freemium model for AI features. The free version will also lack some contextual depth, such as multi-step repair guides.

- Late 2026: Integration into Google Maps and YouTube. DeepMind's research paper hints at "spatial querying" where users could point at a building and get historical information, or point at a product in a YouTube video and be directed to a purchase page. These integrations are currently in internal alpha.

- 2027: A developer API for third-party apps. The research paper mentions a "pointer-aware SDK" that would allow app developers to embed the Magic Pointer capability directly into their own camera interfaces. No pricing or firm release date has been announced, but analysts expect a per-query pricing model similar to Google Cloud Vision API.

The Bigger Picture

This announcement sits at the intersection of two major trends: ambient computing and multimodal AI. Ambient computing envisions a world where technology recedes into the background, responding to natural human gestures rather than requiring explicit commands. The Magic Pointer is arguably the most practical implementation of this vision to date — it requires no voice commands, no typing, and no menu navigation. You simply point. This mirrors the trajectory of Google's earlier work with Project Soli (radar-based gesture control) and Google Lens, but the addition of DeepMind's language model makes the interaction vastly more intelligent.

The second trend is the commoditization of real-time AI. As models shrink in size and latency drops below human perception thresholds, the line between "search" and "assistance" blurs. The Magic Pointer is not searching the web; it is generating contextual responses in real time, blending object recognition with generative language. This represents a direct challenge to Apple's on-device AI strategy and Meta's AR ambitions. Google's advantage is distribution: by embedding this in Chrome, it reaches more users in one month than Meta's Ray-Ban Stories have sold in two years. The question is whether users will embrace pointing at objects as a primary interaction mode, or whether it remains a novelty that wears off after the first few demos.

Key Takeaways

- [Technical Breakthrough]: DeepMind's pointer-attention mechanism achieves under 50ms latency, making real-time object querying feel instantaneous and natural for the first time.

- [Massive Distribution]: The feature is coming to Gemini in Chrome in June 2026 for Gemini Advanced subscribers, with a free tier expected in Q3 2026 that could extend it to Chrome's 3.3 billion monthly active users without requiring dedicated AR hardware.

- [Strategic Pivot]: Google is betting on software-first AR, decoupling advanced computer vision from Googlebook's niche hardware to compete with Apple's and Meta's wearable strategies.

- [Future Revenue Stream]: A developer API planned for 2027 suggests Google intends to monetize the capability through per-query pricing, similar to its existing cloud vision services.