TL;DR

Three leading AI assistants — Claude, ChatGPT, and Gemini — were each given the same prompt to build a working Chrome extension, and only one succeeded. The experiment reveals that even the most advanced large language models still struggle with consistent, production-ready code generation, a critical limitation as developers increasingly rely on AI for software development.

What Happened

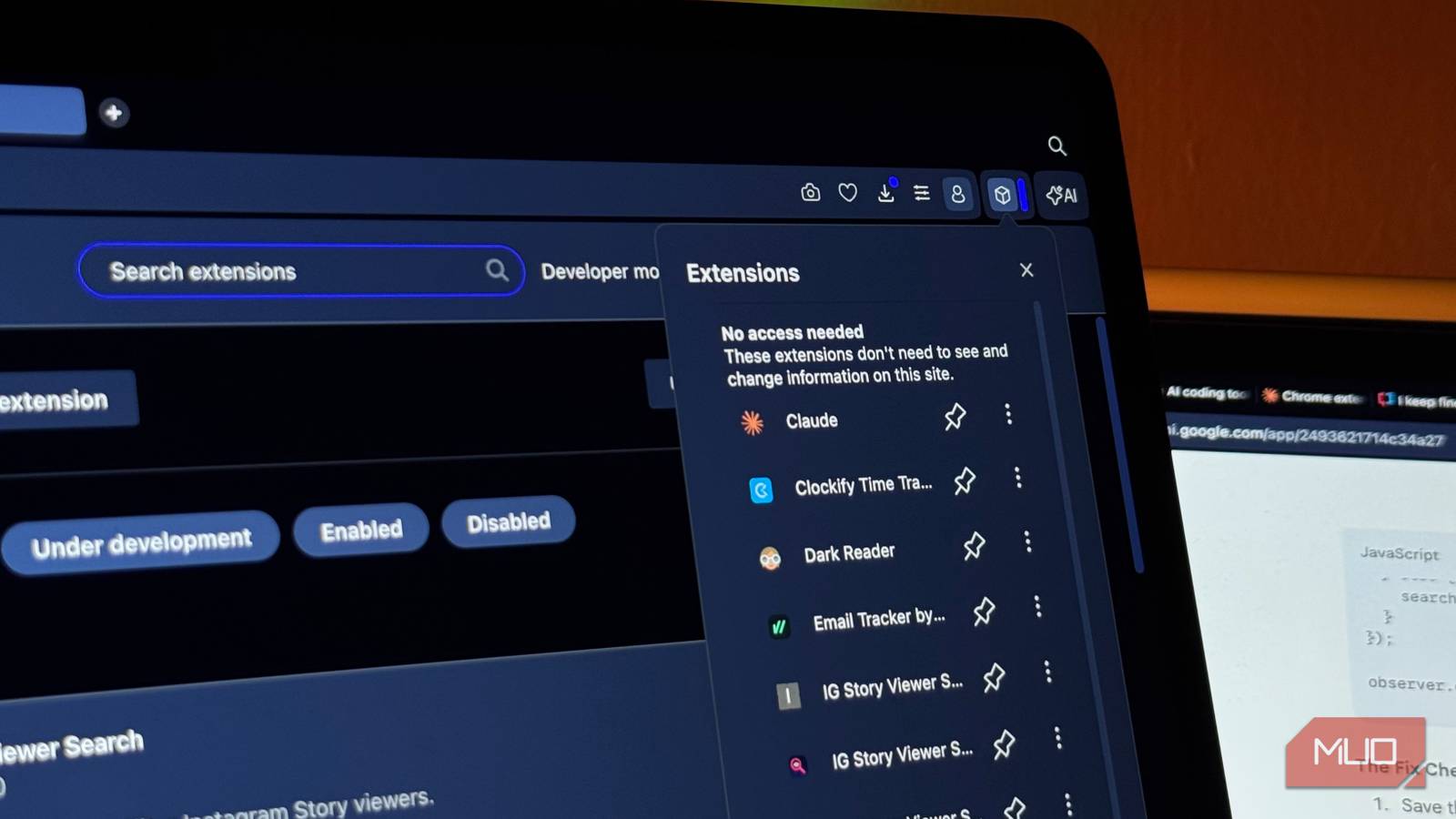

A MakeUseOf reporter sat down with three of the most advanced AI models on the market — Claude (Anthropic), ChatGPT (OpenAI), and Gemini (Google) — and gave each the exact same prompt: build a functional Chrome extension. Only one of the three produced code that actually worked.

Key Facts

- The experiment was conducted by MakeUseOf and published on Sunday, April 26, 2026.

- The three models tested were Claude (Anthropic), ChatGPT (OpenAI), and Gemini (Google).

- Each model received the identical prompt to build a Chrome extension with the same specifications.

- Only one of the three AI-generated extensions functioned correctly on the first attempt.

- The two failing models produced code that contained syntax errors, missing dependencies, or logic flaws that prevented the extension from loading or executing.

- The successful model's extension required no manual debugging or code edits before it could be installed and used in Google Chrome.

- This test highlights a persistent gap between AI's impressive conversational ability and its reliability for practical, executable software development tasks.
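Some of the load-blocking failures listed above can be caught before an extension ever reaches Chrome. The sketch below is illustrative and not part of the MakeUseOf test. The function name `preflight_manifest` is hypothetical, and the check is deliberately narrow: it confirms only that `manifest.json` parses as JSON and carries the three keys Chrome's documentation lists as required (`manifest_version`, `name`, `version`).

```python
import json

# Keys Chrome's documentation lists as required in every manifest file.
REQUIRED_KEYS = ("manifest_version", "name", "version")


def preflight_manifest(manifest_text: str) -> list[str]:
    """Return problems likely to stop Chrome from loading the extension.

    An empty list means this narrow check found nothing wrong; it does not
    prove that the extension works.
    """
    try:
        manifest = json.loads(manifest_text)
    except json.JSONDecodeError as exc:
        return [f"manifest.json is not valid JSON: {exc.msg} (line {exc.lineno})"]

    if not isinstance(manifest, dict):
        return ["manifest.json must contain a JSON object at the top level"]

    problems = [
        f"missing required key: {key}"
        for key in REQUIRED_KEYS
        if key not in manifest
    ]
    # Assumption: the extension targets Manifest V3, the current format.
    if "manifest_version" in manifest and manifest["manifest_version"] != 3:
        problems.append("manifest_version is not 3 (Manifest V3 expected)")
    return problems
```

Running AI-generated output through a check like this takes seconds and separates "will not load at all" from the harder class of logic flaws, which still need a human or a test suite.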

Breaking It Down

The core finding of this experiment is that AI code generation remains a high-variance activity. While all three models can fluently describe how to build a Chrome extension, and even produce plausible-looking code blocks, the gap between "looks right" and "actually runs" is still wide. For a task as structured and well-documented as building a browser extension — something with clear APIs, manifest files, and established patterns — a 33% success rate among top-tier models is striking.
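How much weight can a one-in-three result bear? As a rough sketch, the arithmetic below computes a Wilson score interval for 1 success in 3 trials. It rests on a simplifying assumption that the test itself does not make: that the three models can be treated as independent trials sharing one underlying success probability.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 is roughly 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1 + z**2 / trials
    centre = (p + z**2 / (2 * trials)) / denom
    half_width = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return centre - half_width, centre + half_width


low, high = wilson_interval(1, 3)
print(f"{low:.2f} to {high:.2f}")  # prints "0.06 to 0.79"
```

Under that assumption, the data are compatible with an underlying success rate anywhere from about 6% to about 79%, so the figure is best read as a single data point rather than a measured rate.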

Two out of three models failed to produce working code for a task that has thousands of public examples, official Google documentation, and a fixed specification.

This failure rate is especially notable because Chrome extension development is not an obscure or novel domain. The Chrome Extensions API has been stable for years, and countless tutorials, open-source projects, and Stack Overflow answers exist. The models are not being asked to invent a new algorithm or solve a novel problem — they are being asked to assemble well-known components in a standard way. That two of them could not do so suggests that their training data, while vast, does not translate into reliable execution for even moderately complex multi-file projects.

The test does not explain why the results diverged, but the failure modes it reports point to the usual trouble spots in an extension. The successful model likely handled the manifest.json file correctly, generated a background script with proper event listeners, and produced a popup HTML file with correctly linked JavaScript. The failing models may have hallucinated API functions, mismatched file names, or omitted critical boilerplate. These are not subtle errors: they are the kind of mistakes a junior developer would catch in minutes, yet the AI systems output them with confidence.
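Mismatched file names are the easiest of these failures to detect mechanically. The following is a minimal sketch, not a description of how the test was run: the helper names `referenced_files` and `missing_files` are hypothetical, and only a handful of common Manifest V3 fields (`background.service_worker`, `action.default_popup`, `content_scripts`, `icons`) are inspected.

```python
def referenced_files(manifest: dict) -> set[str]:
    """Collect file paths named by a few common Manifest V3 fields."""
    paths: set[str] = set()

    background = manifest.get("background", {})
    if "service_worker" in background:
        paths.add(background["service_worker"])

    action = manifest.get("action", {})
    if "default_popup" in action:
        paths.add(action["default_popup"])

    for entry in manifest.get("content_scripts", []):
        paths.update(entry.get("js", []))
        paths.update(entry.get("css", []))

    paths.update(manifest.get("icons", {}).values())
    return paths


def missing_files(manifest: dict, generated: set[str]) -> list[str]:
    """Return manifest-referenced paths that were never actually generated."""
    return sorted(referenced_files(manifest) - generated)


# Example: the manifest names background.js, but the model emitted
# service-worker.js instead.
manifest = {
    "manifest_version": 3,
    "name": "Demo",
    "version": "1.0",
    "background": {"service_worker": "background.js"},
    "action": {"default_popup": "popup.html"},
}
print(missing_files(manifest, {"manifest.json", "service-worker.js", "popup.html"}))
# prints ['background.js']
```

A check like this covers only references made from the manifest. Script tags inside the popup HTML and imports between scripts would need their own parsing.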

What Comes Next

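- Model updates and benchmarks: OpenAI, Anthropic, and Google will likely release new versions in the coming months that claim improved code generation. Independent benchmarks will need more multi-file, execution-tested tasks like this one. HumanEval checks single, self-contained functions, and SWE-bench, although it runs test suites against real multi-file repositories, draws on Python projects rather than browser extensions.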

- Developer tool integration: Companies like GitHub (Copilot), Replit, and Cursor are racing to integrate AI code generation into their IDEs. The failure rate seen here suggests that human review will remain mandatory for AI-generated code in production environments for at least another 12–18 months.

- Specialised code models: Expect to see more domain-specific fine-tuned models (e.g., "Chrome Extension Builder") that are trained specifically on browser extension codebases, potentially achieving higher success rates than general-purpose LLMs.

- Regulatory and liability questions: As AI-generated code becomes more common, questions of liability for bugs, security vulnerabilities, or compliance failures will intensify. A 33% success rate on a simple task raises serious questions about fitness for use in critical software.

The Bigger Picture

This experiment is a microcosm of two larger trends in technology. First, the reliability ceiling of LLMs is becoming visible. While models have improved dramatically at natural language tasks, their performance on deterministic, executable tasks like code generation still lags behind. The gap between "sounds convincing" and "actually works" is the defining challenge for AI in software engineering. Second, the commoditisation of AI assistants means that users now have multiple options, but the differences between them are not trivial. A developer who chooses the wrong model for a task may waste hours debugging code that looks plausible but is fundamentally broken. The MakeUseOf test is a practical reminder that not all AI assistants are equal, and that the hype around AI coding tools must be tempered with rigorous, independent testing.

Key Takeaways

- 33% success rate: Only one of three leading AI models built a working Chrome extension from a single prompt, a sign that AI code generation is not yet reliable enough for production use.

- Multi-file complexity is a likely weakness: Coordinating multiple files (manifest, background script, popup) requires cross-file consistency, and this appears to be where the failing models struggled.

- Human review remains essential: Even the best AI code generators produce errors that a competent developer would catch immediately, meaning AI is a productivity aid, not a replacement for skilled programmers.

- Model choice matters: The wide variance in outcomes means developers must test and compare models for their specific use case, rather than assuming any leading AI assistant will suffice.