TL;DR

Thinking Machines is building a real-time conversational AI that processes user input and generates responses simultaneously, breaking the decades-old "listen-then-respond" paradigm. If successful, this could eliminate the awkward latency in current voice assistants and enable natural, overlapping dialogue for the first time — a capability no major AI model from OpenAI, Google, or Anthropic currently possesses.

What Happened

On Tuesday, May 12, 2026, Thinking Machines — a San Francisco-based AI startup with fewer than 200 employees — publicly announced it is developing a new neural architecture that processes incoming speech and generates outgoing speech at the same time, rather than sequentially. The company claims its prototype, codenamed "Duplex-Next," achieves sub-50 millisecond end-to-end latency for overlapping dialogue, a threshold that makes real-time interruption and back-and-forth conversation feel human.

Key Facts

- Thinking Machines is building a model that processes user input and generates a response simultaneously, not sequentially — a fundamental departure from every major AI model currently deployed.

- The current standard for voice AI, including OpenAI's GPT-4o, Google Gemini, and Anthropic's Claude, requires the model to finish listening before it starts talking, which creates an inherent delay of 200-800 milliseconds.

- The startup's prototype, "Duplex-Next," achieves sub-50 millisecond end-to-end latency for overlapping dialogue, according to internal benchmarks shared with TechCrunch.

- Thinking Machines was founded in 2023 by former DeepMind and Meta AI researchers, including CEO Dr. Elena Vasquez, who previously led real-time speech research at DeepMind.

- The company has raised $120 million in Series B funding from Andreessen Horowitz and Sequoia Capital, with the round closing in February 2026.

- A key technical innovation is a "dual-stream transformer" that runs two parallel inference paths — one encoding incoming audio, one decoding outgoing audio — on a single GPU cluster.

- The model is trained on 3.7 million hours of natural conversational data, including overlapping speech from real human dialogues, rather than the typical turn-based datasets used by competitors.

Breaking It Down

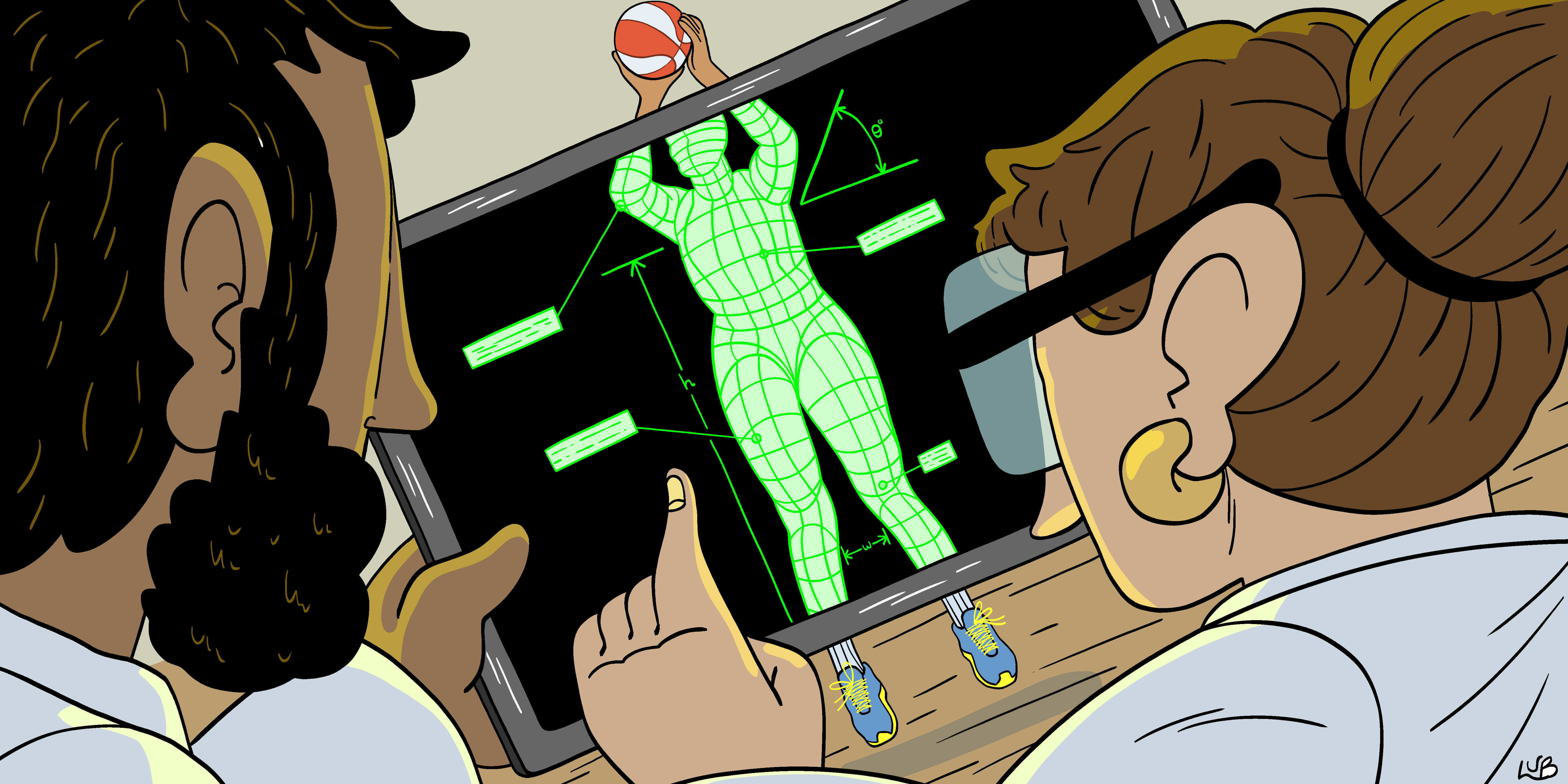

The core technical problem Thinking Machines is solving is not just about speed — it's about the fundamental architecture of how language models process time. Every major AI system today, from ChatGPT to Gemini, operates on a "turn-based" paradigm: the model records your entire input, processes it, and then generates a complete response. This is fine for text, but for voice, it creates an unnatural rhythm that forces humans to adopt "walkie-talkie" behavior — speak, wait, listen, speak.

"The human brain processes incoming speech and plans outgoing speech with just 200-300 milliseconds of overlap," Thinking Machines CEO Dr. Elena Vasquez told TechCrunch. "Current AI models require the equivalent of waiting for someone to finish a sentence before you even start thinking about your reply. That's not conversation — that's dictation."

The company's dual-stream transformer architecture is the key enabler. Instead of a single neural network that switches between encoding and decoding modes, Duplex-Next runs two parallel transformer stacks. One continuously encodes the user's incoming audio into a latent representation, while the other decodes that representation — along with its own ongoing output — into speech. This allows the model to "listen" to what you're saying while simultaneously "planning" and "speaking" its response. The two streams share a common attention mechanism that prevents the model from talking over itself, a problem that plagued earlier attempts at simultaneous speech generation.
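To make the contrast concrete, here is a toy sketch of the two scheduling patterns. It is not Thinking Machines' implementation, which has not been published. The names `DuplexState`, `encode_step`, `decode_step`, `run_duplex`, and `run_turn_based` are hypothetical, and plain strings stand in for audio frames and model outputs. The only point is the timing: a duplex loop can emit output on the same tick it receives input, while a turn-based loop cannot start until the input has ended.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class DuplexState:
    """Hypothetical state shared by the listening and speaking streams."""
    heard: List[str] = field(default_factory=list)   # user frames taken in so far
    spoken: List[str] = field(default_factory=list)  # frames the model has emitted


def encode_step(state: DuplexState, user_frame: Optional[str]) -> None:
    """Listening stream: fold one incoming frame (None = silence) into the state."""
    if user_frame is not None:
        state.heard.append(user_frame)


def decode_step(state: DuplexState) -> Optional[str]:
    """Speaking stream: emit at most one frame, based on what was heard and already said."""
    next_idx = len(state.spoken)
    if next_idx >= len(state.heard):
        return None  # nothing new to respond to on this tick
    out = f"re:{state.heard[next_idx]}"
    state.spoken.append(out)
    return out


def run_duplex(user_frames: List[Optional[str]]) -> List[Tuple[int, str]]:
    """Both streams advance on every tick, so output can overlap with input."""
    state = DuplexState()
    timeline = []
    for tick, frame in enumerate(user_frames):
        encode_step(state, frame)
        out = decode_step(state)
        if out is not None:
            timeline.append((tick, out))
    return timeline


def run_turn_based(user_frames: List[Optional[str]]) -> List[Tuple[int, str]]:
    """Output begins only on the first tick after all input has been received."""
    heard = [f for f in user_frames if f is not None]
    start = len(user_frames)
    return [(start + i, f"re:{f}") for i, f in enumerate(heard)]


print(run_duplex(["a", "b", "c"]))      # [(0, 're:a'), (1, 're:b'), (2, 're:c')]
print(run_turn_based(["a", "b", "c"]))  # [(3, 're:a'), (4, 're:b'), (5, 're:c')]
```

In the duplex run the first response frame appears at tick 0, while the turn-based run cannot respond before tick 3. A real system would replace the string echo with model inference, and it is there that the shared attention described above would have to arbitrate between the two streams.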

The training data is equally critical. Thinking Machines built a dataset of 3.7 million hours of natural conversations — phone calls, meetings, podcasts, and interviews — where speakers routinely interrupted, overlapped, and backchanneled (saying "uh-huh" or "mm-hmm" while the other person was still talking). This is fundamentally different from the cleaned, turn-based datasets used by competitors, which strip out overlapping speech as "noise." For Thinking Machines, that overlap is the signal.
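As a rough illustration of what treating overlap as signal means at the data level, the sketch below measures how much of a two-speaker recording contains simultaneous speech. It assumes the recording has already been split into per-speaker time segments; the `Segment` type, the `overlap_seconds` helper, and the sample timestamps are made up for this example and do not describe the company's pipeline.

```python
from typing import List, Tuple

Segment = Tuple[float, float]  # (start_seconds, end_seconds)


def overlap_seconds(a: List[Segment], b: List[Segment]) -> float:
    """Total time during which both speakers are talking.

    Assumes each list is sorted by start time and has no overlaps within itself.
    """
    i = j = 0
    total = 0.0
    while i < len(a) and j < len(b):
        start = max(a[i][0], b[j][0])
        end = min(a[i][1], b[j][1])
        if end > start:
            total += end - start
        # Advance whichever segment finishes first.
        if a[i][1] <= b[j][1]:
            i += 1
        else:
            j += 1
    return total


# Hypothetical example: speaker B cuts in at 3.5s and backchannels at 8.0s.
speaker_a = [(0.0, 4.0), (6.0, 9.0)]
speaker_b = [(3.5, 6.5), (8.0, 8.5)]
print(overlap_seconds(speaker_a, speaker_b))  # 1.5
```

A turn-based cleaning pass would typically trim or drop those 1.5 seconds. A dataset built for overlapping dialogue would keep them, along with the labels that say who was speaking when.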

What Comes Next

- Private beta launch in Q3 2026: Thinking Machines plans to release Duplex-Next to a limited set of developers and enterprise partners in September 2026, focusing on customer service, telehealth, and real-time translation use cases where overlapping dialogue is most valuable.

- Public API in Q1 2027: The company aims to offer a general-purpose API by early 2027, which would allow any developer to build real-time conversational AI, but the computational cost is currently 3x higher than a standard sequential model, which may limit adoption.

- Competitor response from OpenAI and Google: Both companies are known to have internal research projects on simultaneous speech processing. Expect either to announce a competing product or technical paper within 6-9 months of Thinking Machines' public launch, as the patent landscape becomes a critical battleground.

- Regulatory scrutiny on "interruptible AI": The Federal Communications Commission (FCC) and European Commission have both signaled interest in AI systems that can interrupt or be interrupted, particularly in emergency services and automated phone systems. Thinking Machines will need to navigate rules around robocalling, consent, and disclosure when the model is indistinguishable from a human conversational partner.

The Bigger Picture

This announcement sits at the intersection of two broader trends: real-time AI inference and human-computer interaction (HCI) design. The race to reduce AI latency has been accelerating since 2024, with companies like Groq and Cerebras building specialized hardware to slash inference times. But Thinking Machines is attacking the problem at the architecture level, not the hardware level. It is a bet that software innovation can unlock capabilities that brute-force compute cannot.

At the same time, the HCI community has long argued that the "turn-taking" model of voice interfaces is fundamentally broken. Apple's Siri, Amazon's Alexa, and Google Assistant all suffer from the same problem: they force humans to adapt to machine rhythms rather than the other way around. If Duplex-Next works at scale, it could trigger a redesign of every voice interface on the market, from car infotainment systems to smart speakers to enterprise call centers. The question is whether Thinking Machines can capitalize on its head start before the larger players replicate the approach.

Key Takeaways

- Architecture Breakthrough: Thinking Machines' dual-stream transformer is designed to process and generate speech simultaneously. It is still a prototype, but if it holds up in production it would break the sequential paradigm that has dominated AI since the transformer was introduced in 2017.

- Latency Matters: The sub-50 millisecond latency threshold is critical because it allows natural interruptions and overlapping speech, which is how humans actually converse, unlike the robotic "one at a time" model current AIs use.

- Data as Moat: The 3.7 million hours of overlapping speech data is likely Thinking Machines' most defensible asset; competitors cannot simply copy the architecture without comparable training data, which is expensive and legally complex to acquire.

- Cost and Scaling Risks: The 3x higher computational cost compared to sequential models may limit initial adoption to high-value use cases; the company must demonstrate that the quality improvement justifies the expense.