TL;DR

In 2026, voice-first interfaces are emerging as a serious challenger to keyboards and mice as the primary mode of workplace interaction, with companies like OpenAI, Microsoft, and Google racing to embed conversational AI into every desk. This shift matters now because it is already changing office layouts, privacy norms, and productivity metrics, pushing employers to redesign physical spaces for a whisper-filled, always-listening environment.

What Happened

The quiet hum of keyboards is giving way to a new sound in the modern office: the low murmur of professionals speaking to their computers. TechCrunch reported on May 10, 2026, that the rise of advanced voice AI — from OpenAI’s GPT-5 Voice Mode to Microsoft Copilot’s real-time dictation — is driving a fundamental redesign of how and where knowledge workers do their jobs. The shift is not just about software; it is about the physical office itself, with acoustic panels, privacy pods, and whisper-optimized microphones becoming the new standard equipment.

Key Facts

- OpenAI launched GPT-5 Voice Mode in March 2026, capable of sustaining hour-long, context-aware conversations with near-zero latency, directly competing with Google’s Gemini Voice and Amazon’s Alexa for Business.

- Microsoft reported that 42% of its Microsoft 365 enterprise users now use voice commands for at least one core task daily, up from 12% in 2023.

- Gartner forecasts that by 2028, 30% of all enterprise software interactions will be voice-driven, up from under 5% in 2024.

- Steelcase, the world’s largest office furniture manufacturer, launched a new product line in April 2026 called “Sonic Works” — desks with built-in directional microphones and sound-dampening partitions designed specifically for voice-first workflows.

- A 2025 Harvard Business Review study found that workers using voice dictation for email and document creation were 23% faster on average, but reported 34% higher cognitive fatigue after two hours of continuous use.

- WeWork announced in January 2026 that it is retrofitting 70% of its co-working spaces with “whisper zones” — soundproofed booths optimized for low-volume speech recognition.

- Apple is reportedly developing a “Silent Mode” for its Siri Pro enterprise offering, using bone-conduction microphones that allow users to speak without producing audible sound.

Breaking It Down

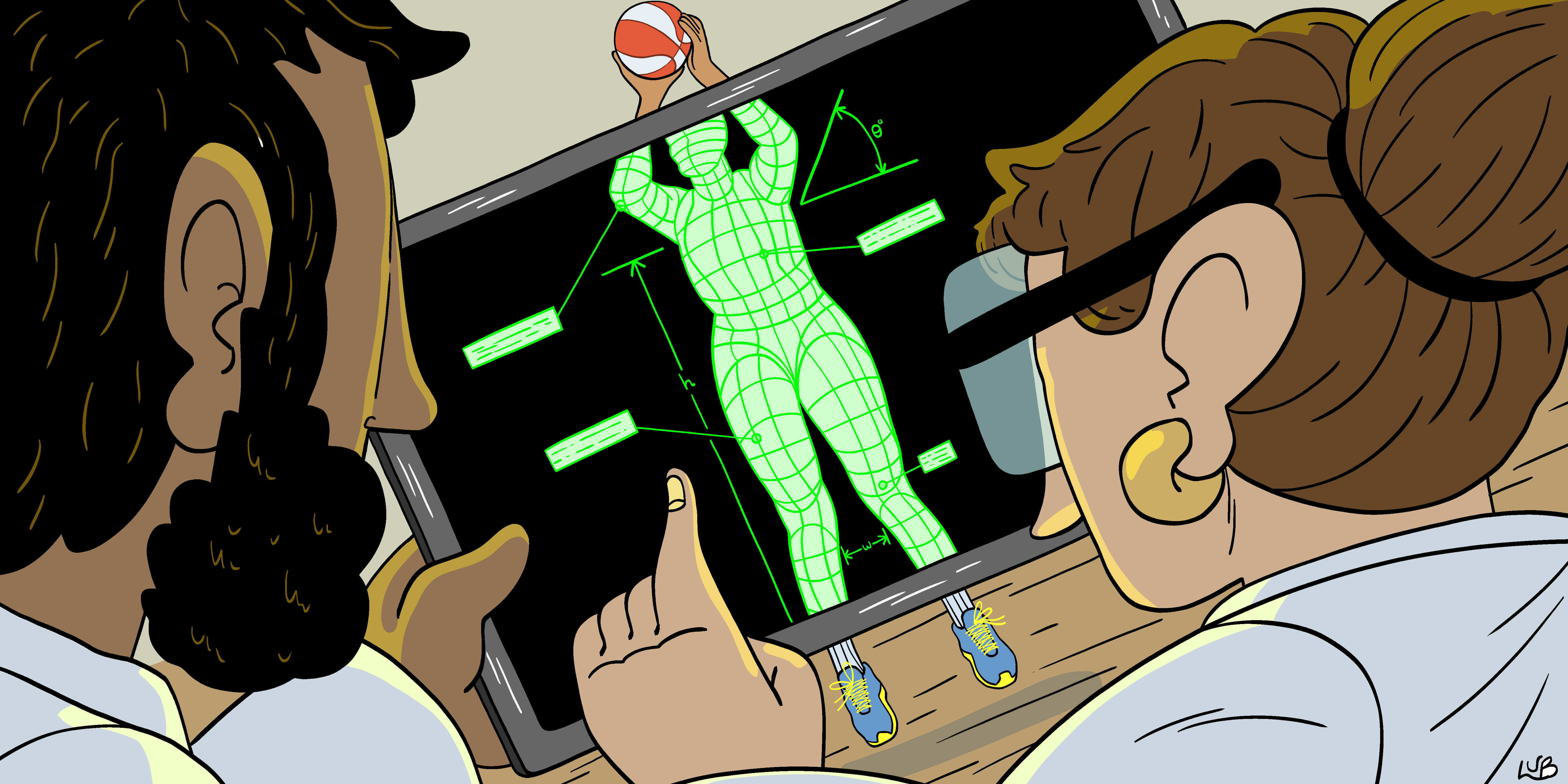

The core driver of this transformation is not merely better speech recognition — it is the arrival of conversational AI that can understand context, intent, and nuance. Older voice-to-text systems required users to speak in stilted, punctuated phrases (“Dictation: new email. Subject: quarterly results. Full stop.”). The GPT-5 and Gemini generation can follow natural conversation, handle interruptions, and even infer missing information. This makes voice a viable primary interface for complex tasks like data analysis, code debugging, and strategic planning — not just simple dictation.

The 2025 Harvard Business Review finding of 34% higher cognitive fatigue after two hours of continuous voice use exposes the hidden cost of voice-first work. While speaking is faster than typing for most people, it demands constant vocal cord engagement, auditory processing, and the suppression of environmental noise. The result is a paradox: workers get more done in less time, but they tire faster. This is driving demand for asynchronous voice tools: systems that let users record voice messages or commands that are processed later, reducing the pressure of real-time interaction.

The physical office is being reshaped around this new reality. Steelcase’s Sonic Works line is a direct response to the acoustic nightmare of 50 workers all talking to their computers simultaneously in an open-plan office. Traditional cubicles and open desks are being replaced by “voice zones” — areas with different acoustic profiles. “Loud zones” allow full-volume dictation; “whisper zones” are for low-voice commands; “silent zones” ban voice input entirely. WeWork is betting that this tiered approach will become the standard, as companies realize that a one-size-fits-all voice environment is unworkable.

Privacy is the most contentious issue. A voice-first office means every conversation with a computer is potentially audible to colleagues, and every command may be recorded on a server. Apple’s reported bone-conduction “Silent Mode” for Siri Pro is one proposed technical fix: it would let users issue commands without producing audible sound. But such technology raises its own concerns: if a device can pick up speech that no one else can hear, where is the line of consent? EU regulators are already investigating whether always-listening workplace AI violates GDPR’s restrictions on passive data collection, with guidance from the European Data Protection Board expected by September 2026.

What Comes Next

The next 18 months will determine whether voice-first becomes the default or remains a niche tool. Key developments to watch:

- September 2026: The European Data Protection Board is expected to issue binding guidance on workplace voice AI, potentially requiring explicit opt-in for all voice data collection. This could force Microsoft and Google to redesign their enterprise voice features for EU customers.

- Q4 2026: OpenAI is rumored to release GPT-5 Voice Mode Pro, a version specifically optimized for noisy environments, with real-time background noise cancellation and speaker identification. Pricing and availability will be critical.

- January 2027: Steelcase and Herman Miller are both expected to announce new office furniture lines that integrate Amazon Alexa for Business and Google Gemini directly into desks, eliminating the need for separate microphones or speakers.

- March 2027: The first major corporate office redesign based entirely on voice-first principles is scheduled to open in Austin, Texas, built by Meta for its Reality Labs division. The design will feature zero traditional desks, replacing them with “conversation stations” designed for spoken interaction.

The Bigger Picture

This story is a collision of two larger trends: The Post-Keyboard Interface and The Acoustic Economy. The first trend — the move away from typing toward speech, gesture, and gaze — has been brewing for a decade, but generative AI has provided the missing piece: a machine that can hold a real conversation. The second trend is less discussed but equally important: as voice becomes a primary input, office design becomes an acoustic engineering problem. Companies that once spent millions on ergonomic chairs will now spend millions on acoustic panels and directional microphones.

The broader implication is that productivity itself is being redefined. For 40 years, a productive worker was someone who typed fast and quietly. In the voice-first office, a productive worker is someone who can speak clearly, think out loud, and tolerate the cacophony of colleagues doing the same. This will favor different personality types and cognitive styles, potentially exacerbating the introvert-extrovert divide in the workplace. The quiet, focused programmer may find themselves at a disadvantage against the fast-talking, voice-driven collaborator.

Key Takeaways

- Voice AI is now viable for complex work: GPT-5 Voice Mode and Gemini Voice have crossed the threshold from gimmick to tool, enabling real-time, context-aware conversations that replace typing for many tasks.

- Cognitive fatigue is the hidden barrier: A 34% increase in cognitive fatigue after two hours of continuous voice use (per Harvard Business Review) means adoption will require new work patterns, not just new software.

- Office design must be rethought: Acoustic zoning, soundproof pods, and built-in microphones are becoming standard, as open-plan offices cannot handle 50 simultaneous voice users.

- Privacy regulation will shape the market: European Data Protection Board guidance on always-listening AI, expected in September 2026, could mandate opt-in consent, forcing companies to choose between compliance and functionality.