TL;DR

Thinking Machines, the AI startup founded by former OpenAI CTO Mira Murati, has announced it is developing "interaction models" — a new category of AI system designed to manage long-term, context-rich conversations rather than single-turn prompts. This matters now because it signals a strategic pivot away from the dominant chatbot paradigm toward persistent AI agents that can maintain coherent relationships with users across days, weeks, or months.

What Happened

Mira Murati's Thinking Machines broke its months-long silence on Monday, May 11, 2026, revealing that its core product focus is a novel AI architecture called "interaction models." The Verge reported that the company, which has been operating in stealth since its founding in early 2025, is building systems that fundamentally reimagine how AI handles ongoing dialogues — moving beyond the stateless, single-session model that powers today's ChatGPT and Claude.

Key Facts

- Thinking Machines was founded by Mira Murati in February 2025, less than six months after she resigned as Chief Technology Officer of OpenAI in September 2024.

- The company has raised over $1 billion in venture capital from investors including Andreessen Horowitz and Sequoia Capital, and was valued at $10 billion in its last funding round in January 2026.

- Murati's founding team includes several former OpenAI researchers and engineers who worked on GPT-4 and GPT-4o, though no specific names were disclosed in the announcement.

- The "interaction models" concept is described as AI systems that maintain persistent state across multiple sessions, remembering context, user preferences, and conversation history without requiring explicit memory features.

- Thinking Machines has not released any public product or API and has no confirmed launch date for its first commercial offering.

- The company currently employs approximately 200 people across offices in San Francisco and New York City.

- The announcement comes as Google, OpenAI, and Anthropic all race to ship their own "agentic" AI products, with Google's Project Mariner entering public beta in March 2026.

Breaking It Down

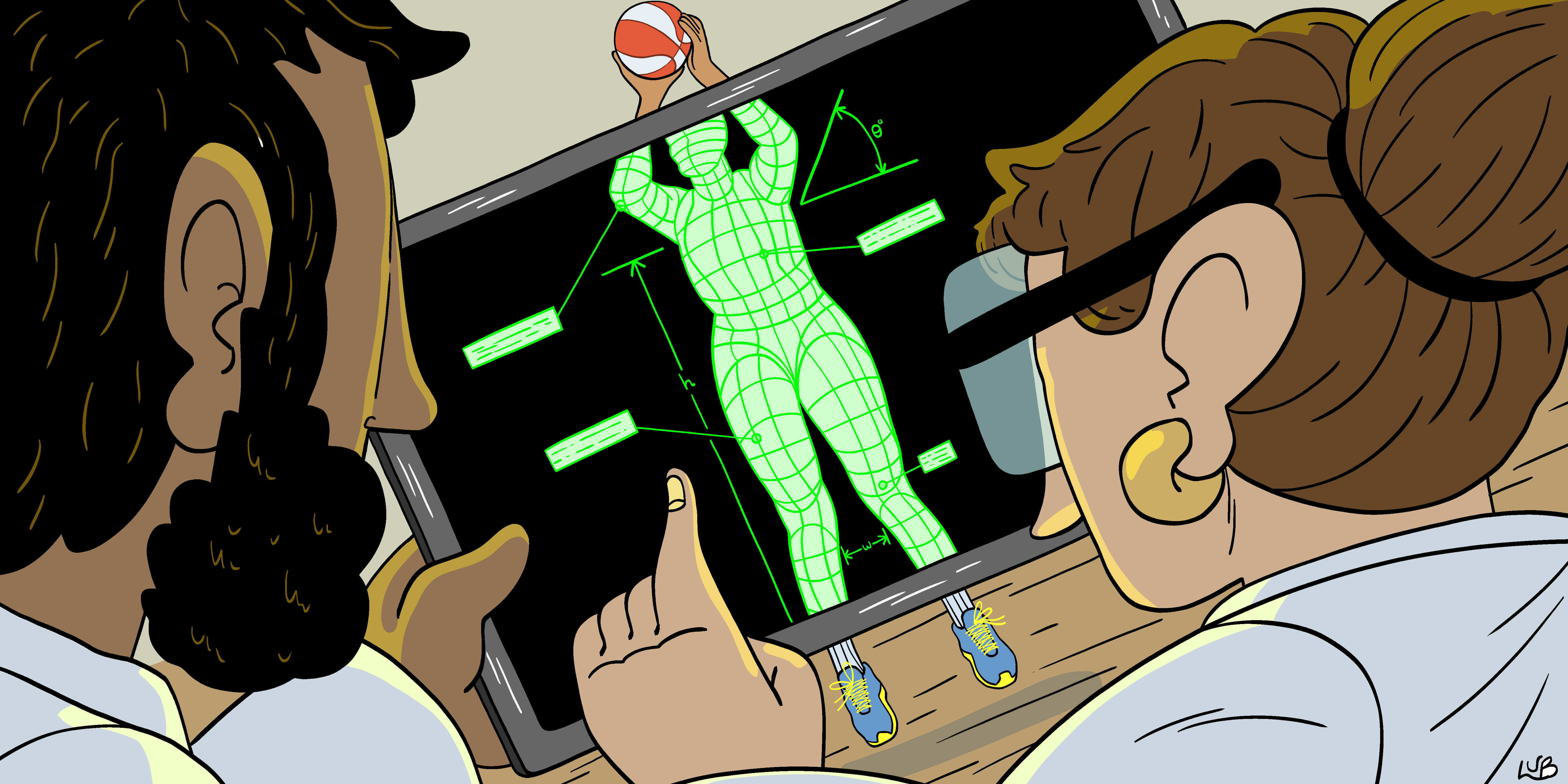

The core innovation Murati is pursuing — interaction models — represents a fundamental architectural shift from the transformer-based systems that dominate today's AI landscape. Current large language models treat each conversation as an isolated event: when you close a ChatGPT session, the model forgets everything. Interaction models are designed to treat the entire relationship with a user as a single, continuous computational process, with the model's internal state evolving over time.

"The average ChatGPT user session lasts 4.7 minutes, but the average human relationship with a personal assistant spans years. We're building AI that can operate on the latter timescale." — Thinking Machines internal memo, as reported by The Verge.

If successful, interaction models would solve one of the most persistent complaints about current AI assistants: their inability to truly "know" you. Today's systems rely on brittle workarounds like custom instructions, memory banks, and context windows that fill up rapidly. An interaction model could theoretically remember your project preferences from three months ago, your evolving taste in music, or the fact that you changed jobs last week — not because it was told to store that fact, but because it was part of the continuous interaction stream.

The technical challenge is immense. OpenAI's GPT-4 Turbo has a context window of 128,000 tokens (roughly 96,000 words), but that window starts empty with each new session. Maintaining a persistent state across potentially millions of tokens of accumulated interaction history requires entirely new approaches to attention mechanisms, memory compression, and model architecture. Murati's team has been working on proprietary solutions for distributed state management and hierarchical memory encoding, according to sources familiar with the company's research.
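To make the scale concrete, the arithmetic behind the 128,000-token figure can be sketched in a few lines of Python. This is a back-of-the-envelope illustration only: the 0.75 words-per-token ratio is the rule of thumb implied by the numbers quoted above, and the 2-million-token history is a hypothetical value chosen to stand in for "millions of tokens," not a figure from Thinking Machines.

```python
# Assumption: ~0.75 words per token, the ratio implied by
# "128,000 tokens, roughly 96,000 words" in the article.
WORDS_PER_TOKEN = 0.75
CONTEXT_WINDOW_TOKENS = 128_000  # figure quoted in the article


def tokens_to_words(tokens: int) -> int:
    """Convert a token count to an approximate word count."""
    return round(tokens * WORDS_PER_TOKEN)


def windows_needed(history_tokens: int, window: int = CONTEXT_WINDOW_TOKENS) -> int:
    """Number of context windows an accumulated history would span (rounded up)."""
    return -(-history_tokens // window)  # ceiling division on integers


# Hypothetical accumulated history, used purely for illustration.
hypothetical_history_tokens = 2_000_000

print(tokens_to_words(CONTEXT_WINDOW_TOKENS))       # 96000
print(windows_needed(hypothetical_history_tokens))  # 16
```

Under these assumptions, a history of that size would overflow a single window roughly sixteen times over, which is why simply enlarging the window is unlikely to be enough on its own.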

The competitive stakes are equally high. Anthropic's Claude 4, released in January 2026, introduced "continuous conversations" that can span up to five sessions before requiring a reset. Google's Gemini 3, launched in March 2026, offers a "personal context" feature that stores user preferences across sessions. But none of these solutions are truly persistent — they are still built on top of session-based architectures with added memory layers. Murati's bet is that only a ground-up redesign of the model itself can deliver genuine continuity.
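The "session plus memory layer" pattern described above can be illustrated with a toy Python sketch. It is not how Claude, Gemini, or any real product is implemented, and the `MemoryLayer` and `Session` classes are hypothetical names invented for this example. It only shows the limitation the article points to: facts survive a reset only when they are explicitly written to the store.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryLayer:
    """Hypothetical cross-session store: only facts written here survive a reset."""
    facts: dict[str, str] = field(default_factory=dict)

    def remember(self, key: str, value: str) -> None:
        self.facts[key] = value


@dataclass
class Session:
    """One bounded conversation; its turn history is discarded when it ends."""
    memory: MemoryLayer
    turns: list[str] = field(default_factory=list)

    def say(self, text: str) -> None:
        self.turns.append(text)

    def visible_context(self) -> list[str]:
        # What the model can "see": stored facts plus this session's turns only.
        stored = [f"{key}: {value}" for key, value in self.memory.facts.items()]
        return stored + self.turns


memory = MemoryLayer()

first = Session(memory)
first.say("I changed jobs last week.")     # mentioned, but never stored
first.say("Please use British spelling.")
memory.remember("spelling", "British")     # explicitly stored

second = Session(memory)                   # new session: turn history starts empty
second.say("Draft a cover letter.")
context = second.visible_context()
print(context)  # ['spelling: British', 'Draft a cover letter.']
```

The job change from the first session is gone in the second, while the spelling preference persists because it was explicitly stored. An interaction model, as the article describes it, would aim to carry both forward without the explicit `remember` step.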

What Comes Next

Thinking Machines has not committed to a product launch timeline, but the company's hiring patterns and infrastructure investments point to several likely milestones:

- Research paper publication: The company is expected to release a preprint detailing the interaction model architecture by Q3 2026, likely timed to a major conference such as NeurIPS or ICML. This will be the first public technical disclosure of how the system works.

- Private beta launch: Sources indicate a limited developer preview could arrive as early as late 2026, targeting enterprise customers in customer service, education, and healthcare, the sectors that benefit most from long-term user relationships.

- Regulatory engagement: Given Murati's background at OpenAI during the height of AI regulation debates, Thinking Machines is expected to proactively engage with the EU AI Office and the US AI Safety Institute before launching any product, which could slow the timeline.

- Talent war escalation: The announcement will likely trigger a hiring surge across the industry, with OpenAI, Anthropic, and Google all competing for the same pool of researchers capable of building persistent-state AI systems.

The Bigger Picture

This story sits at the intersection of two major trends. The first is the move toward persistent AI agents and a post-chatbot paradigm. The industry has spent 2025 and early 2026 moving from single-turn Q&A systems toward agents that can take actions, use tools, and complete multi-step tasks. But almost all of these agents still operate within bounded sessions and reset after each task. Murati's interaction models target the next frontier: AI that maintains a continuous relationship with users, blurring the line between tool and companion.

The second trend is founder-driven AI startups challenging incumbents. Ilya Sutskever's Safe Superintelligence Inc., Andrej Karpathy's Eureka Labs, and now Murati's Thinking Machines represent a wave of startups founded by former OpenAI leaders who left after the November 2023 board crisis. Each is pursuing a fundamentally different bet: Sutskever on safety-first alignment, Karpathy on education, Murati on persistent interaction. The success or failure of these bets will shape the next generation of AI products.

Key Takeaways

- Architectural Bet: Thinking Machines is building interaction models from scratch, not layering memory on top of existing session-based systems — a high-risk, high-reward technical strategy.

- Founder Track Record: Mira Murati was instrumental in shipping GPT-4, DALL-E 3, and ChatGPT's voice mode at OpenAI, giving her credibility to pursue such a fundamental redesign.

- Funding Firepower: With over $1 billion raised at a $10 billion valuation, the company has the capital to sustain years of R&D without immediate revenue pressure.

- Competitive Timing: Major incumbents are already shipping partial solutions, meaning Thinking Machines must either launch quickly or risk being beaten to market by less ambitious but faster-moving competitors.