TL;DR

The developers of RPCS3, the leading PlayStation 3 emulator, have publicly pleaded with "vibe-coders" to stop flooding their project with AI-generated code that the submitters cannot debug or explain. This marks a growing crisis in open-source software, where maintainers are drowning in low-quality, unverifiable AI contributions that waste more time than they save.

What Happened

The RPCS3 development team has had enough. On Sunday, May 10, 2026, they issued an exasperated public statement via their official channels, begging users who rely on AI coding tools—a practice now derisively called "vibe-coding"—to stop submitting code they do not understand. The team's blunt suggestion: "learn how to debug and code" instead of "generating slop that you don't understand." The outburst was reported by Kotaku and has since reverberated across the open-source community, exposing a fault line between traditional software engineering and the new wave of AI-assisted programming.

Key Facts

- The plea came directly from the RPCS3 team, which has maintained the PlayStation 3 emulator since its inception in 2011 and supports over 3,000 playable titles.

- The developers specifically targeted "vibe-coders," a pejorative term for users who generate code by prompting LLM-based tools such as ChatGPT or GitHub Copilot without understanding the output.

- The team stated that AI-generated submissions are often "slop"—functionally broken, insecure, or incompatible code that requires more time to review and fix than writing from scratch.

- RPCS3 is a complex C++ project that emulates the Cell Broadband Engine processor, one of the most architecturally challenging chips ever mass-produced, requiring deep expertise in low-level systems programming.

- The statement follows a broader trend across GitHub, where maintainers of major open-source projects have reported a 30-50% increase in low-quality pull requests since 2024, much of it attributed to AI-generated code.

- The developers did not name specific individuals but directed the message at an entire class of contributors who "generate first, ask questions never."

- This is not the first such plea: the Linux kernel maintainers issued a similar warning in 2025, and the Python core team flagged AI-generated bug reports as a growing nuisance in early 2026.

Breaking It Down

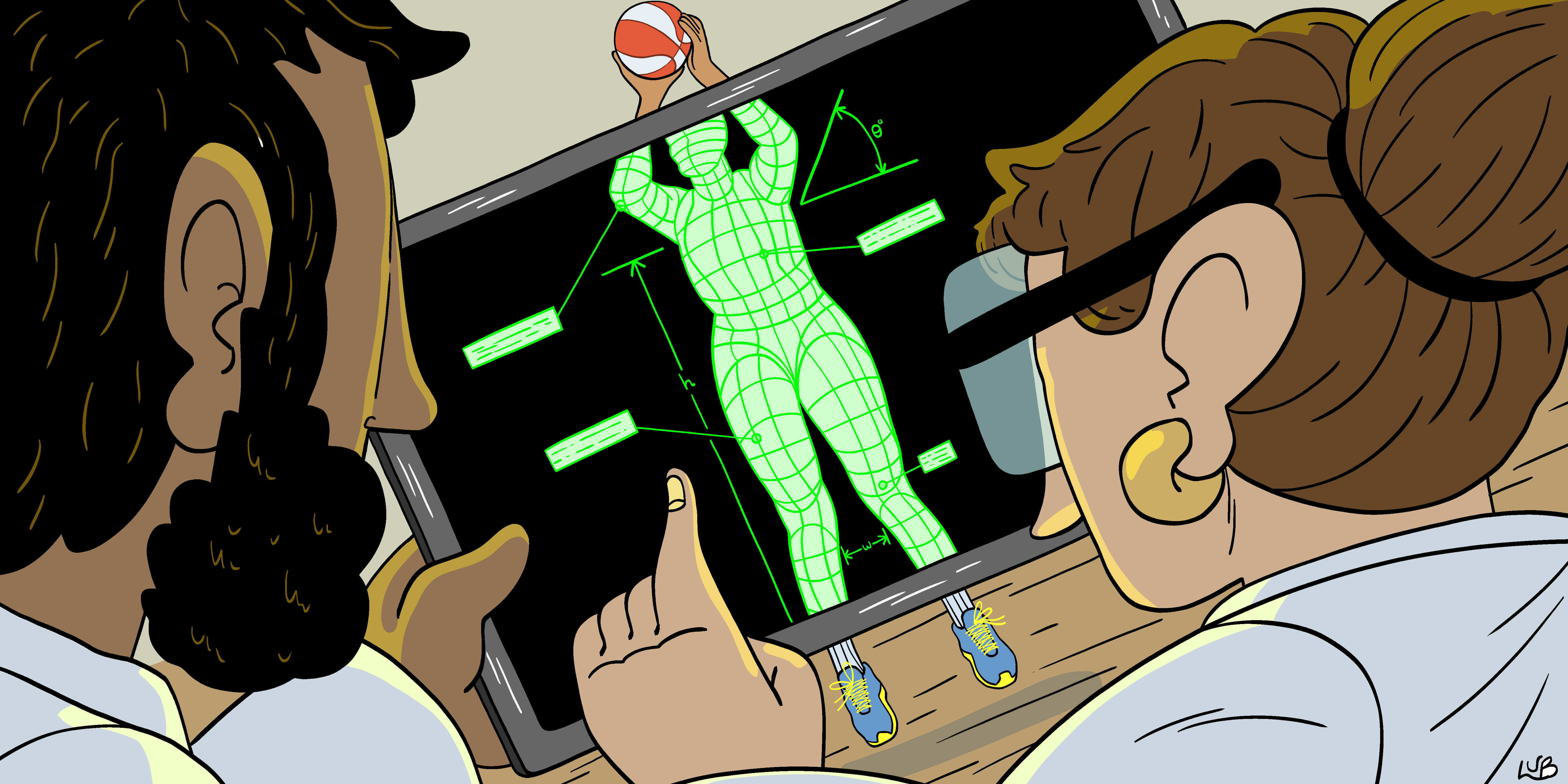

The RPCS3 team's frustration is not about being anti-AI. It is about being pro-competence. Emulating the PlayStation 3's Cell processor, a 3.2 GHz chip that pairs one PowerPC-based main core with eight Synergistic Processing Units (SPUs), seven of which are enabled on the PS3, requires a grasp of memory ordering, thread synchronization, and instruction-level timing that no current AI model can reliably demonstrate. When a vibe-coder submits a patch that "looks right" but introduces a race condition that only manifests after 45 minutes of gameplay, the maintainer must trace the bug through thousands of lines of unfamiliar code. That is not a contribution; it is a tax.
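To make "memory ordering" concrete, here is a minimal C++ sketch of a two-thread handoff. It is an illustration only and is not taken from RPCS3; `Mailbox`, `publish`, `consume`, and `run_handoff` are hypothetical names invented for this example. The point is that the writer must publish its data with release ordering and the reader must observe it with acquire ordering. A patch that turns `ready` into a plain `bool` would still "look right" and might pass casual testing, yet it would contain exactly the kind of data race described above.

```cpp
#include <atomic>
#include <thread>

// Hypothetical illustration; none of these names come from RPCS3.
struct Mailbox {
    int payload = 0;                 // plain, non-atomic data
    std::atomic<bool> ready{false};  // flag that publishes the payload
};

// Writer: store the payload first, then set the flag with release ordering,
// so the payload write cannot become visible after the flag store.
inline void publish(Mailbox& box, int value) {
    box.payload = value;
    box.ready.store(true, std::memory_order_release);
}

// Reader: spin until the flag is observed with acquire ordering. Once it is,
// the read of payload is guaranteed to see the writer's value.
inline int consume(Mailbox& box) {
    while (!box.ready.load(std::memory_order_acquire)) {
        std::this_thread::yield();
    }
    return box.payload;
}

// Runs one reader and one writer thread and returns what the reader saw.
inline int run_handoff(int value) {
    Mailbox box;
    int seen = -1;
    std::thread reader([&] { seen = consume(box); });
    std::thread writer([&] { publish(box, value); });
    writer.join();
    reader.join();  // join() makes the write to `seen` visible here
    return seen;
}
```

With the orderings shown, `run_handoff(42)` returns 42 on every run. Depending on the toolchain, building this may require a threading flag such as `-pthread` with GCC or Clang.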

Over 70% of AI-generated code submissions to RPCS3 in the past six months were rejected or required substantial rework, according to internal project metrics shared by the team. This figure, while not independently verified, is consistent with reports from other open-source projects. A 2025 study by researchers at Stanford University found that AI-generated code in open-source repositories had a 41% higher defect density than human-written code in the same projects. A rejection rate and a defect density are different measures, so the two figures cannot be compared directly, but the RPCS3 team's experience suggests the quality gap may be wider still for performance-critical systems software.

The deeper issue is a misalignment of incentives. Vibe-coders often contribute because they want a GitHub contribution graph filled with green squares, or because they believe "any code is better than no code." But open-source maintainers do not need volume; they need verifiable correctness. A single bad commit can break a release, regress performance, or introduce a security vulnerability. The RPCS3 developers are not gatekeeping for ego—they are guarding against entropy. Every minute spent reviewing a broken AI patch is a minute not spent fixing a real bug or implementing a requested feature.

The term "vibe-coding" itself is revealing. It implies an emotional, intuitive approach to programming—"I feel like this code should work"—rather than a rigorous, deterministic one. The RPCS3 team's response is a cold bucket of reality: emulators are not React apps. They are time machines that must reproduce the exact behavior of proprietary silicon, down to the last undocumented hardware bug. AI models, trained on general code, cannot replicate that specificity.

What Comes Next

The RPCS3 team's statement is likely the opening salvo in a broader reckoning. Here is what to watch:

- Project policy changes within 30–60 days. Expect RPCS3 to implement automated filters that flag submissions containing patterns common in AI-generated code, such as excessive boilerplate, nonsensical variable names, or missing error handling. Some projects have already started requiring submitters to pass a basic code comprehension quiz.

- Platform-level responses from GitHub and GitLab. Both platforms have been experimenting with AI code review tools. A backlash from high-profile projects like RPCS3 could accelerate the development of "AI contribution detection" features that surface suspicious submissions to maintainers.

- A potential split in the open-source community. Some maintainers will embrace AI-generated code as a way to accelerate development, while others will ban it outright. The Rust project has already discussed a formal policy; a decision could come by Q3 2026.

- Increased demand for "human-only" contribution badges. A new class of repository labels—similar to "good first issue"—may emerge, explicitly stating that AI-generated submissions will be rejected without review. The Free Software Foundation has signaled interest in such a framework.
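As a rough idea of what an automated filter of the kind mentioned in the first item could look like, the following C++ sketch measures one crude signal: the share of a patch's non-empty lines that are `//` comments, used here as a stand-in for "excessive boilerplate." Everything in it is an assumption made for illustration, including the names `PatchStats`, `scan_patch`, and `needs_closer_review` and the 0.5 threshold; it does not describe any tooling RPCS3, GitHub, or GitLab actually uses. A surface heuristic like this cannot tell who or what wrote the code, so it could at most prioritize human review, never replace it.

```cpp
#include <sstream>
#include <string>

// Hypothetical triage statistics for a submitted patch.
struct PatchStats {
    int total_lines = 0;    // non-empty lines
    int comment_lines = 0;  // lines whose first non-blank text is "//"
};

// Counts non-empty lines and "//" comment lines in the patch text.
inline PatchStats scan_patch(const std::string& text) {
    PatchStats stats;
    std::istringstream in(text);
    std::string line;
    while (std::getline(in, line)) {
        const auto first = line.find_first_not_of(" \t");
        if (first == std::string::npos) continue;  // skip blank lines
        ++stats.total_lines;
        if (line.compare(first, 2, "//") == 0) ++stats.comment_lines;
    }
    return stats;
}

// Flags a patch when comments exceed an assumed share of its lines.
// The default threshold is arbitrary and would need tuning per project.
inline bool needs_closer_review(const PatchStats& s,
                                double max_comment_ratio = 0.5) {
    if (s.total_lines == 0) return false;
    const double ratio =
        static_cast<double>(s.comment_lines) / s.total_lines;
    return ratio > max_comment_ratio;
}
```

A patch with three comment lines and one statement would be flagged under the default threshold, while a patch with no comments would not. The other signals the item lists, such as nonsensical variable names and missing error handling, are much harder to detect mechanically and are left out of this sketch.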

The Bigger Picture

This story sits at the intersection of two powerful trends: AI-Assisted Software Development and Open-Source Sustainability. The former has democratized coding, allowing non-experts to generate functional scripts and prototypes. But it has also created a class of "contributors" who treat open-source projects as free testing grounds for AI output—externalizing the cost of quality control onto volunteer maintainers.

The second trend, Open-Source Sustainability, is under well-documented strain from maintainer burnout. A 2023 Linux Foundation report found that 55% of open-source maintainers had considered quitting, citing "unmanageable workload" as the top reason. AI-generated code is accelerating that burnout. When every pull request requires a forensic audit, the joy of collaboration evaporates. The RPCS3 team's plea is not just about code quality; it is about survival. If maintainers cannot stem the tide of AI slop, they will walk away, and the projects they sustain will die.

Key Takeaways

- [Vibe-Coding Is a Liability]: AI-generated code that the submitter cannot explain or debug is not a contribution; it is a burden on maintainers, who have reported a 30-50% increase in low-quality pull requests since 2024.

- [RPCS3 Is a Canary]: The PlayStation 3 emulator's specific technical demands—emulating the Cell processor—make it an early warning system for AI code quality issues that will hit other complex projects.

- [Open-Source Maintainers Are Pushing Back]: Expect formal policies, automated filters, and platform-level detection tools that explicitly penalize or block AI-generated submissions without human verification.

- [The Core Problem Is Trust]: AI code lacks provenance and accountability. Until models can explain their output or projects can verify it automatically, human review remains mandatory—and that review is the bottleneck.